In 2026, accurate web scraping is less about writing a clever script and more about looking like a normal user in the eyes of anti-bot systems. Residential proxies have become the core infrastructure for serious data collection, especially when scraping e-commerce, travel, local SEO, and social platforms. Tools like ResidentialProxy.io make this practical for everyday use, but the reasons they improve data quality are often misunderstood.

This article explains, in practical terms, how residential proxies help avoid blocks, reduce noise in your datasets, and increase the reliability of the information you collect—based on real-world scraping workflows that run at scale in 2026.

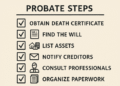

Why Scraping Accuracy Is Harder Than Ever in 2026

Websites in 2026 use increasingly sophisticated defenses to protect content, pricing, and internal APIs. Accuracy isn’t just about getting some data—it’s about consistently getting the right data:

- Dynamic pricing and A/B tests show different content depending on location, device, and history.

- Strict rate limiting bans IPs that scrape “too fast” or behave in ways no human visitor would.

- Bot detection systems (e.g., behavior analysis, browser fingerprinting, CAPTCHAs) actively profile connections from known data centers.

- Geo-specific content means what your script sees from one country can be very different from what real users see elsewhere.

All of this leads to classic accuracy problems: incomplete pages, missing products, altered prices, or responses that look like full HTML but silently omit key elements. Data-center IPs alone increasingly produce a biased and noisy dataset.

What Makes Residential Proxies Different?

A residential proxy routes your traffic through IP addresses assigned to real households by consumer ISPs, not through cloud providers. Because your scraper's requests arrive from the residential network, the target website sees what appears to be a typical home user.

Compared to data-center proxies, residential IPs provide three critical advantages for accuracy:

- They blend in with normal user traffic. Anti-bot tools are trained to distrust clusters of cloud IP ranges. Residential IPs, especially from providers such as ResidentialProxy.io, sit in networks that resemble real customers, so they trigger fewer heuristic alarms.

- They offer real-world geographic distribution. Because they are backed by genuine ISP ranges, you get accurate local content that mirrors what human users see in each region.

- They have better reputation profiles. Residential IPs typically have fewer historical abuse markers than shared data-center blocks that have been hammered by bots for years.

How Residential Proxies Improve Web Scraping Accuracy

Accuracy in scraping can be broken down into four major dimensions: completeness, consistency, fidelity to the real user view, and stability over time. Residential proxies impact each dimension in specific, practical ways.

1. Fewer Blocks = More Complete Data

Every HTTP 403, invisible CAPTCHA, or half-rendered page is effectively a missing data point. Over a large crawl, these gaps introduce silent bias, because the pages that fail are rarely a random sample. Residential proxies can substantially reduce this loss rate.

With ResidentialProxy.io and similar services, you can:

- Rotate IPs per request or per session, spreading load across many home IPs.

- Avoid crowded bad neighborhoods (overused data center subnets with poor reputation).

- Respect rate limits at the IP level, mimicking human visit patterns more closely.

The practical effect is simple: more of your scheduled requests return a full, valid page, so your dataset is more complete and less skewed by missing records.
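The rotation and per-IP pacing described above can be sketched in a few lines. This is a minimal illustration, not a provider API: the `RotatingProxyPool` class, its parameters, and the proxy URLs are hypothetical, and a real pool would come from your provider's gateway or endpoint list.

```python
import itertools
import time


class RotatingProxyPool:
    """Round-robin proxy pool that enforces a minimum gap between uses of one IP.

    Hypothetical helper for illustration; proxy URLs are supplied by the caller.
    """

    def __init__(self, proxy_urls, min_interval_s=2.0, clock=time.monotonic):
        if not proxy_urls:
            raise ValueError("proxy_urls must not be empty")
        self._cycle = itertools.cycle(proxy_urls)
        self._size = len(proxy_urls)
        self._min_interval_s = min_interval_s
        self._clock = clock
        self._last_used = {}  # proxy URL -> time of its most recent (planned) use

    def acquire(self):
        """Return (proxy_url, wait_seconds); the caller sleeps before using it."""
        now = self._clock()
        best = None
        for _ in range(self._size):
            proxy = next(self._cycle)
            last = self._last_used.get(proxy)
            if last is None:
                wait = 0.0
            else:
                wait = max(0.0, self._min_interval_s - (now - last))
            if wait == 0.0:
                self._last_used[proxy] = now
                return proxy, 0.0
            if best is None or wait < best[1]:
                best = (proxy, wait)
        # Every proxy was used too recently: pick the one that frees up first.
        proxy, wait = best
        self._last_used[proxy] = now + wait
        return proxy, wait
```

A caller would do `proxy, wait = pool.acquire()`, then `time.sleep(wait)` before sending the request through `proxy`. Injecting `clock` keeps the pacing logic testable without real delays.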

2. Accurate Geo-Localized Results

Geography now changes almost everything: search rankings, prices, stock availability, shipping options, even entire product catalogs. Scraping from a single country and extrapolating globally can badly distort analytics.

Residential proxies let you:

- Pin traffic to specific countries, regions, or cities and see exactly what a user there sees.

- Compare results across markets (e.g., prices in Germany vs. USA vs. Brazil) with true locale-based responses.

- Collect localized SERPs and content for SEO tracking, local search, and competitor monitoring.

Real-world example: an e-commerce monitor running from a US data-center IP reported “availability: in stock” while EU customers saw “out of stock.” Switching to city-targeted residential proxies uncovered these discrepancies and improved inventory and pricing intelligence.
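Many proxy services expose geo-targeting through parameters encoded in the proxy username, but the exact syntax differs by provider. The sketch below assumes a hypothetical `user-country-xx-city-name` convention and a placeholder gateway at `proxy.example.com:8000`; check your provider's documentation for the real format before using anything like it.

```python
from urllib.parse import quote


def build_geo_proxy_url(username, password, country, city=None,
                        host="proxy.example.com", port=8000):
    """Build a proxy URL that pins traffic to a country and, optionally, a city.

    The username suffix format here is an assumption for illustration only.
    """
    parts = [username, f"country-{country.lower()}"]
    if city:
        parts.append(f"city-{city.lower().replace(' ', '_')}")
    # Percent-encode credentials so characters like '@' cannot break the URL.
    user = quote("-".join(parts), safe="")
    secret = quote(password, safe="")
    return f"http://{user}:{secret}@{host}:{port}"


def proxies_by_market(username, password, markets):
    """Map each market code (e.g. 'US', 'DE') to its own geo-pinned proxy URL."""
    return {m: build_geo_proxy_url(username, password, m) for m in markets}
```

Keeping one proxy URL per market makes it easy to run the same scraping job once per geography and compare the results side by side.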

3. Less Bot Detection Noise and Fewer CAPTCHAs

High-accuracy scraping is not just about avoiding outright blocks. Partial defenses—like injecting extra CAPTCHAs or throttling responses—subtly degrade your data. Some pages return different content when they suspect automated access.

Residential networks look and behave more like genuine user traffic, so your scraper:

- Hits fewer CAPTCHA walls, which means fewer failed or delayed requests.

- Receives less obfuscated HTML or stripped-down versions of pages.

- Is less likely to be shuffled into “bot experiences” that show truncated or altered content.

This translates into cleaner, more representative HTML for parsing—critical for machine learning pipelines and downstream analytics that assume a stable page structure.

4. Stable Sessions for Logged-In or Stateful Flows

Some of the most valuable data sits behind login flows: dashboards, B2B platforms, internal tools, or personalized offers. Here, stability matters more than raw concurrency.

Residential proxies that support session persistence (keeping the same IP for a defined time) help you:

- Maintain long-lived sessions for authenticated scraping.

- Avoid suspicious IP hopping during a single user journey.

- Reduce session resets and forced logouts caused by “unusual activity.”

In practice, a stable session with a clean residential IP leads to fewer mid-flow failures and more complete transaction traces—vital when monitoring complex funnels or pricing logic.

5. Cleaner HTML for Parsing and Fewer Edge Cases

When a site is unhappy with your traffic, it might still return HTTP 200 but serve:

- Different HTML structure.

- Empty or placeholder data sections.

- Injected interstitials, banners, or JavaScript traps.

Residential proxies reduce the frequency of these “non-standard” responses, which means:

- Fewer parser failures and less brittle scraping logic.

- Less post-processing work to filter anomaly pages.

- Higher signal-to-noise ratio in your final datasets.

Over hundreds of thousands of pages, this stability is the difference between an ETL that runs quietly every day and one that constantly breaks on new edge cases.
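Even with residential IPs, it helps to label every response before parsing so that soft blocks never reach the dataset unnoticed. The following sketch shows one simple way to do that. The hint strings, label names, and `required_markers` argument are illustrative assumptions, and substring checks like these will need tuning per target site.

```python
# Phrases that often appear on challenge pages. Illustrative only: a page that
# legitimately mentions "captcha" (e.g. a login form) would be mislabeled.
CAPTCHA_HINTS = ("captcha", "verify you are human", "unusual traffic")


def classify_response(status_code, html, required_markers):
    """Label a response as 'ok', 'blocked', 'captcha', 'degraded', or 'error'.

    required_markers: substrings the expected page must contain, such as the
    class attribute of the product title element.
    """
    if status_code in (403, 429):
        return "blocked"
    if status_code != 200:
        return "error"
    lowered = html.lower()
    if any(hint in lowered for hint in CAPTCHA_HINTS):
        return "captcha"
    # HTTP 200 but the structure we parse is missing: treat as a soft block.
    if any(marker not in html for marker in required_markers):
        return "degraded"
    return "ok"
```

For example, `classify_response(200, page, ['class="product-title"'])` returns `"degraded"` when the page loads but the product title element is absent, which is exactly the case a status-code check alone would miss.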

Using ResidentialProxy.io in Everyday Scraping Workflows

ResidentialProxy.io is designed for practical, day-to-day scraping use. While the exact details of implementation will vary between teams, several patterns have emerged as effective for improving accuracy.

Rotating IPs Intelligently

Rather than rotating IPs randomly at maximum speed, high-accuracy setups:

- Use one IP per logical user session (login, search, browse, checkout).

- Rotate between sessions to distribute load and avoid rate limiting.

- Throttle concurrency by target so each domain sees traffic that looks human-scale.

ResidentialProxy.io’s rotation settings allow you to choose how often IPs change, balancing stability and stealth.
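Throttling concurrency per target is independent of any proxy provider and can be handled in the scraper itself. As a rough sketch, assuming an asyncio-based crawler, a per-domain semaphore caps how many requests are in flight against each host. `DomainThrottle` and the `task` callback are hypothetical names.

```python
import asyncio
from urllib.parse import urlsplit


class DomainThrottle:
    """Limit the number of concurrent requests per target domain."""

    def __init__(self, max_concurrent_per_domain=2):
        self._limit = max_concurrent_per_domain
        self._semaphores = {}  # hostname -> asyncio.Semaphore

    def _semaphore_for(self, url):
        domain = urlsplit(url).hostname or ""
        if domain not in self._semaphores:
            # Created lazily, inside the running event loop.
            self._semaphores[domain] = asyncio.Semaphore(self._limit)
        return self._semaphores[domain]

    async def run(self, url, task):
        """Await task(url) while holding a slot for that URL's domain."""
        async with self._semaphore_for(url):
            return await task(url)
```

With this in place, `await asyncio.gather(*(throttle.run(u, fetch) for u in urls))` can schedule thousands of URLs while each domain still sees only a handful of simultaneous connections.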

Country and City Targeting for Realistic Views

For SEO, competitive intelligence, and pricing research, teams typically:

- Define per-market scraping jobs (e.g., US, UK, FR, DE, BR, AU).

- Assign specific geo-targeted residential pools to each job.

- Compare differences in content, rankings, and prices across these geographies.

Because the IPs are true residential endpoints, what you collect accurately mirrors customer experiences in those regions.
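Once each market has its own job, the comparison step is mostly bookkeeping. The sketch below assumes a hypothetical data shape in which each market maps product IDs to already-parsed records; it reports the products whose chosen field differs between markets.

```python
def find_market_discrepancies(observations, field):
    """Return products whose `field` value is not identical in every market.

    observations: {market: {product_id: {field_name: value}}}, a hypothetical
    shape for parsed results. A product missing from a market shows up as None.
    Note: raw prices in different currencies will always differ, so normalize
    them first if you compare price fields.
    """
    product_ids = set()
    for records in observations.values():
        product_ids.update(records)

    discrepancies = {}
    for pid in sorted(product_ids):
        values = {
            market: records.get(pid, {}).get(field)
            for market, records in observations.items()
        }
        if len(set(values.values())) > 1:
            discrepancies[pid] = values
    return discrepancies
```

Run against availability data, this would surface a case where one market reports a product in stock while another reports it out of stock.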

Session Persistence for Complex User Flows

Workflows that require multiple steps—logins, paginated dashboards, filter changes—benefit from sticky sessions. Typical patterns include:

- Use a sticky residential IP for the full lifetime of a workflow (e.g., 5–30 minutes).

- Store session cookies and reuse them with the same proxy endpoint.

- Rotate to a fresh IP after the workflow ends or after a defined number of requests.

This reduces suspicious context changes (like IP swaps mid-session) and leads to higher completion rates for complex scraping tasks.
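The sticky-session pattern above might look roughly like this using only the Python standard library. It assumes the provider keeps the same exit IP for a given session token embedded in the proxy username; that token syntax, the `{session}` template, and the `StickySession` class are all hypothetical.

```python
import time
import urllib.request
import uuid
from http.cookiejar import CookieJar


class StickySession:
    """Pair one proxy session token with one cookie jar for a whole workflow."""

    def __init__(self, proxy_template, max_age_s=600.0, max_requests=50,
                 clock=time.monotonic):
        # proxy_template must contain "{session}", for example
        # "http://user-session-{session}:pw@proxy.example.com:8000" (made up).
        self._template = proxy_template
        self._max_age_s = max_age_s
        self._max_requests = max_requests
        self._clock = clock
        self._start()

    def _start(self):
        """Begin a fresh session: new token, empty cookies, new opener."""
        self.session_id = uuid.uuid4().hex
        proxy_url = self._template.format(session=self.session_id)
        self.cookies = CookieJar()
        self.opener = urllib.request.build_opener(
            urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url}),
            urllib.request.HTTPCookieProcessor(self.cookies),
        )
        self._started_at = self._clock()
        self._request_count = 0

    def open(self, url, timeout=30):
        """Fetch a URL through the current sticky proxy, reusing its cookies."""
        self._request_count += 1
        return self.opener.open(url, timeout=timeout)

    def rotate_if_needed(self):
        """Call between workflows; starts a new session once limits are hit."""
        too_old = self._clock() - self._started_at >= self._max_age_s
        too_busy = self._request_count >= self._max_requests
        if too_old or too_busy:
            self._start()
            return True
        return False
```

Rotation is deliberately explicit rather than automatic inside `open`, so an IP change can never happen in the middle of a login or checkout flow. Cookies are discarded together with the old IP, which avoids presenting an existing session from a new address.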

Real-World Impact on Data Quality

From day-to-day experience running scrapers on residential networks, several quality improvements are consistently observed when switching from pure data-center setups:

- Higher valid response rate: more HTTP 200s with intact, expected HTML structures.

- Lower anomaly rate: fewer pages flagged as “invalid” due to CAPTCHAs, login prompts, or truncated content.

- Reduced bias: data that more closely reflects the diversity of real-world user experiences across locations.

- Smoother operations: fewer emergency fixes, less need to constantly adjust for new anti-bot behaviors.

On the analytics side, this leads to more reliable dashboards, more trustworthy machine learning features, and greater confidence in decisions driven by scraped data.
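These improvements are easier to verify when they are measured the same way before and after a proxy change. A minimal sketch, assuming each response has already been given a label such as `"ok"`, `"captcha"`, or `"degraded"` (hypothetical label names) by your own validation step:

```python
from collections import Counter


def quality_summary(labels):
    """Summarize a crawl from per-response labels.

    valid_rate counts responses labeled 'ok'; anomaly_rate counts the rest.
    """
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {"total": 0, "valid_rate": 0.0, "anomaly_rate": 0.0, "by_label": {}}
    valid = counts.get("ok", 0)
    return {
        "total": total,
        "valid_rate": valid / total,
        "anomaly_rate": (total - valid) / total,
        "by_label": dict(counts),
    }
```

Computing this per target, per market, and per proxy pool makes it clear whether a change in setup actually moved the valid response rate or only shifted failures from one category to another.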

Best Practices for Accurate and Responsible Use in 2026

Residential proxies are powerful, and with that power comes responsibility. To maximize accuracy while staying compliant and respectful of targets:

- Follow the law and site policies: respect robots.txt where appropriate, terms of service, and relevant data-protection regulations.

- Throttle requests: mimic realistic human patterns instead of hammering endpoints.

- Avoid sensitive targets: do not scrape login-protected personal data without clear legal grounds and permission.

- Monitor error trends: watch for rising block or CAPTCHA rates and adjust patterns before damaging IP reputation.

- Log everything: store status codes, proxy identifiers, and response metadata to help debug accuracy issues.

ResidentialProxy.io typically provides analytics and controls to help you enforce these practices and keep your operations sustainable.
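Independently of any provider dashboard, the logging and error-trend advice above is straightforward to implement on the client side. The sketch below writes one JSON line per request and tracks a rolling block rate; the `ScrapeLog` class, field names, window size, and threshold are illustrative assumptions.

```python
import json
import time
from collections import deque

BLOCK_STATUSES = {403, 429}  # statuses treated as blocks in this sketch


class ScrapeLog:
    """Write one JSON line per request and track the recent block rate."""

    def __init__(self, sink, window=200, alert_threshold=0.10):
        self._sink = sink  # any object with write(), e.g. an open file
        self._recent = deque(maxlen=window)  # True where a request was blocked
        self._alert_threshold = alert_threshold

    def record(self, url, status_code, proxy_id, elapsed_ms, body_bytes):
        entry = {
            "ts": time.time(),
            "url": url,
            "status": status_code,
            "proxy_id": proxy_id,
            "elapsed_ms": elapsed_ms,
            "body_bytes": body_bytes,
        }
        self._sink.write(json.dumps(entry) + "\n")
        self._recent.append(status_code in BLOCK_STATUSES)

    def block_rate(self):
        """Fraction of the last `window` requests that were blocked."""
        if not self._recent:
            return 0.0
        return sum(self._recent) / len(self._recent)

    def should_back_off(self):
        """True when the rolling block rate exceeds the alert threshold."""
        return self.block_rate() > self._alert_threshold
```

Checking `should_back_off()` between batches gives the scraper a chance to slow down or pause before a rising block rate turns into burned IPs.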

Conclusion: Residential Proxies as a Core Accuracy Tool

In 2026, residential proxies are no longer a niche upgrade; they are foundational to any scraping operation that cares about accuracy. By reducing blocks, revealing true geo-specific content, minimizing bot-detection artifacts, and supporting stable sessions, they directly improve the completeness, consistency, and reliability of scraped data.

Paired with disciplined scraping practices and platforms like ResidentialProxy.io, residential networks turn web scraping from a fragile, whack-a-mole exercise into a dependable data pipeline that closely reflects how real users experience the web.